Hypothetical Document Embeddings (HyDE): Smarter Retrieval in RAG

I'm a dedicated and curious engineer with a strong passion for building technology that makes a difference. My journey in tech began with a deep interest in web development, which has grown into hands-on experience in full-stack development using modern frameworks and best practices. In addition to web development, I actively explore the fields of Web3 and cybersecurity. I enjoy learning about blockchain technologies, smart contracts, and decentralized applications—constantly seeking new ways to apply them in real-world scenarios. I believe in continuous learning, clean code, and solving real problems with thoughtful design and secure solutions.

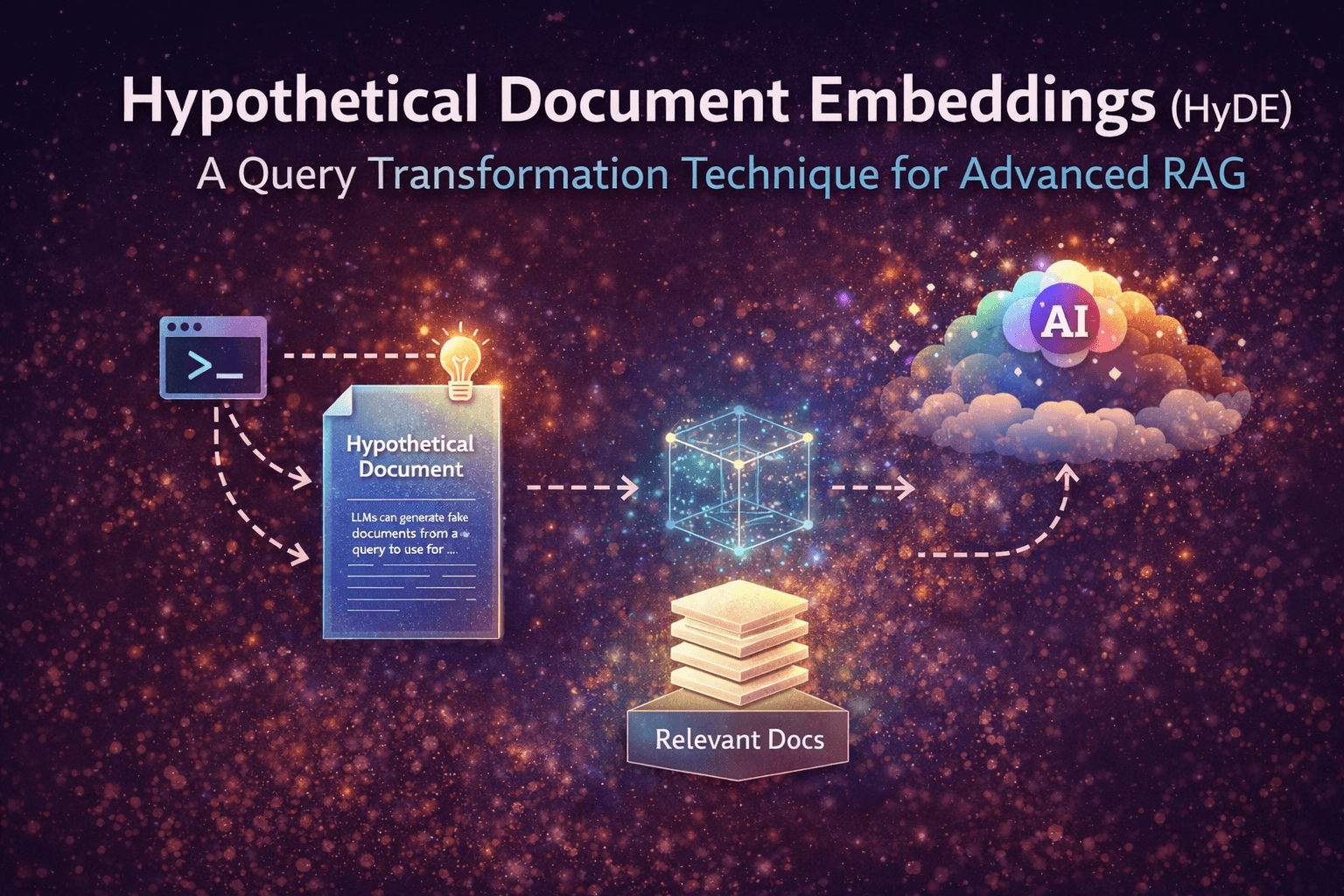

Most RAG systems work like this: Take user query → convert to embedding → search → generate answer

But here’s the issue: User queries are often too short, too vague, and missing context.

And because of that, retrieval is not always accurate. So what if, instead of searching with a weak query

We first expand it into a rich document?

That’s exactly what HyDE (Hypothetical Document Embeddings) does.

What is HyDE?

HyDE is a retrieval technique where we:

Generate a hypothetical document from the user query

Convert that document into embeddings

Use it to search for better context

Where does HyDE fit in RAG?

A typical RAG pipeline:

Indexing → Store documents as embeddings

Retrieval → Find relevant data

Generation → Produce answer

Here, HyDE improves the retrieval step

Instead of Query → Search, we do Query → Generate document → Search

How HyDE Works (Step-by-Step)

Step 1: Generate a Hypothetical Document

We use the LLM’s internal knowledge to expand the query:

Step 2: Convert to Embeddings

Step 3: Perform Semantic Search

Since the input is rich, retrieval becomes: more aligned, more meaningful

Step 4: Generate Final Response

Now the model answers using: original query, high-quality retrieved context

Why HyDE Works So Well?

In normal conditions, the RAG, the query is short, which has weak embeddings and average retrieval.

In HyDE, the generated doc is rich, so there is strong embedding and better retrieval.

Essentially, we are expanding the query, adding the hidden context, and improving semantic matching.

When Should You Use HyDE?

When queries are too short or vague, the domain is complex, retrieval quality is inconsistent.

Final Thought

RAG is not just about storing embeddings.

It’s about how you search.

HyDE shifts the thinking from:

“Search what user said” to “Search what user meant.”

If you found this useful, I write simple blogs on:

GenAI Systems, backend engineering, system design

Follow along to catch more.